Dispatches from the Build

What I learned from building AI infrastructure sixteen hours a day for eight weeks

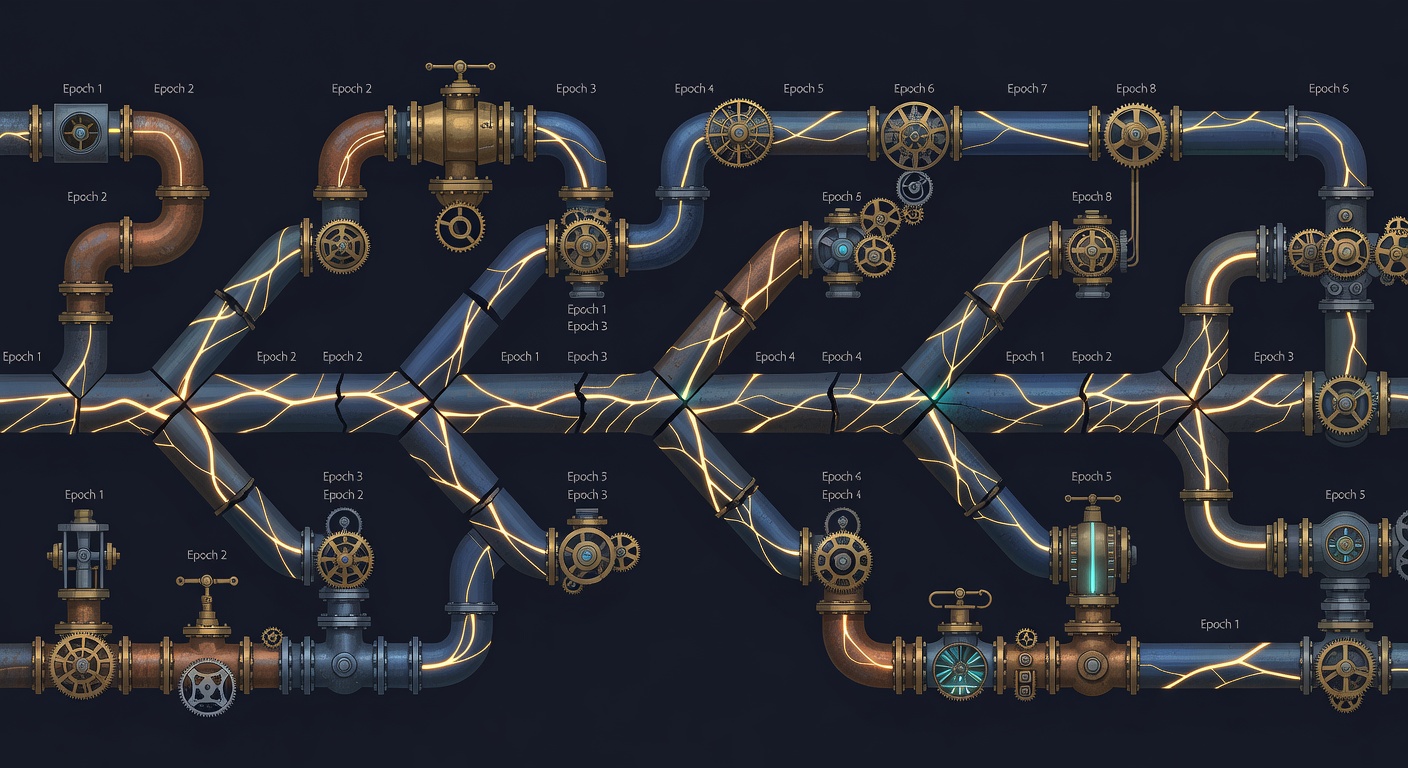

I built an AI infrastructure system over eight weeks. Over 800 commits. Sustained sixteen-hour days. Self-directed, no external pressure, no deadline except my own. The velocity was real. So was the risk.

This is a field report on what sustained the pace, what almost broke it, and what I learned about the gap between speed and sustainability.

What Made It Fast

The speed didn’t come from working hard. I’ve worked hard before — same hours, fraction of the output. The speed came from alignment.

Every system in the stack was designed around how my mind actually works, not how a productivity framework says it should. When the cognitive architecture examination I’d done weeks earlier translated into concrete system design, the friction vanished. I wasn’t translating between my thinking and my tools. The tools were the thinking.

The practical effect: decisions that used to take twenty minutes took seconds. “Should this feature work this way?” became “Does this preserve cognitive momentum?” — answerable immediately because the doctrine was explicit. One filter. Consistent results. The deliberation just… stopped.

The AI tooling amplified this. An agent that understood the project context, the architecture, the naming conventions could execute at the speed of specification. Describe what you want precisely, and it appears. The bottleneck moved entirely to knowing what to build next. Implementation became a near-constant. The variable was clarity of intent.

Sustained velocity compounds. Day one, you’re learning the system. Day five, you’ve built enough context that each new piece slots in faster. Day twenty, you’re making decisions from pattern recognition, not analysis. The system teaches you about itself through the act of building it. The longer you sustain the pace, the faster you get.

Up to a point.

The Treadmill Trap

Speed improvements create expectation ratchets. You speed up, your own expectations recalibrate (not anyone else’s; yours), and you’re running just as hard at a higher altitude. What felt like extraordinary velocity last week is baseline this week. The dopamine of shipping fast fades. What remains is the expectation that you’ll continue shipping fast.

I noticed this around week three. The output was objectively impressive. But the feeling was “this is what we do now” rather than “look what we accomplished.” The system had absorbed the speed gain and normalized it. The natural response was to push for more speed, and that was the response I had to actively resist.

This is the treadmill. The system will happily consume every hour you give it. There’s always another feature that could ship, another improvement that’s clearly valuable, another thread to pull. The work is engaging in a way that makes it hard to stop. This isn’t drudgery-driven overwork. It’s the more dangerous kind: passion-driven overwork where the thing consuming your time is something you actually care about.

The critical moment is before the ratchet locks. Deciding consciously that the system is fast enough, then holding that speed while converting the surplus into life outside the build. Nobody else makes this decision for you — especially not when you’re working alone on something you love.

I caught it. Barely. But I caught it because I’d already mapped this failure mode during the cognitive architecture work. “The gravitational pull” is what happens when engaging work creates its own gravity field and pulls everything else into orbit around it. Not burnout. Something quieter: the slow atrophy of everything that isn’t the work.

Decisions Per Day

Output can’t be your sustainability metric. The baseline keeps shifting; what counts as “a lot” on day one is normal by day twenty. Hours can’t be the metric either, because the whole point of AI tooling is decoupling time from output.

The metric that actually holds is decisions per day.

The irreducible human contribution in an AI-augmented workflow is judgment: what to build, whether the output matches intent, which architectural approach, approve or reject. These are the decisions that compound into quality, and they have a natural ceiling.

Past a certain number of decisions, quality degrades. Pattern recognition gets fuzzy. You start approving things you’d normally question because the judgment budget is depleted. And that degradation is invisible in the moment; you feel productive, you’re still shipping, but the work has gotten subtly worse.

I don’t know the exact number. It varies by day, by complexity, by how much sleep I got. But I know the feeling when I’ve crossed it. Decisions start feeling effortful rather than automatic. I re-read the same diff three times without absorbing it. The gap between “I checked this” and “I actually evaluated this” quietly opens up.

The system should optimize for decision quality, not throughput. Build infrastructure that reduces the number of decisions required (good defaults, clear conventions, automated validation) so that the decisions you do make are the ones that matter.
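To make "decisions per day" something you can actually look at, here is a minimal sketch of a decision log. It is an illustration, not the tooling from the build: the `Decision` record, the `kind` labels, the two helper functions, and especially the `ceiling` value are all hypothetical. The real ceiling varies by day and has to be found by feel; the code only shows which days ran past a number you chose.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Decision:
    """One logged judgment call. Field names are illustrative assumptions."""
    day: date
    kind: str   # e.g. "approve", "reject", "architecture" (hypothetical labels)
    note: str


def decisions_per_day(log: list[Decision]) -> Counter:
    """Count how many judgment calls were logged on each day."""
    return Counter(d.day for d in log)


def days_over_ceiling(log: list[Decision], ceiling: int) -> list[date]:
    """Return the days, in date order, whose decision count exceeded the ceiling."""
    counts = decisions_per_day(log)
    return sorted(day for day, n in counts.items() if n > ceiling)


# Example usage with made-up entries and an arbitrary ceiling of 2.
example_log = [
    Decision(date(2024, 1, 1), "architecture", "pick storage layout"),
    Decision(date(2024, 1, 2), "approve", "accept generated parser"),
    Decision(date(2024, 1, 2), "reject", "rework retry logic"),
    Decision(date(2024, 1, 2), "approve", "accept naming change"),
]
flagged = days_over_ceiling(example_log, ceiling=2)
```

The count is a crude proxy. It says nothing about how hard each decision was, so it works best as a prompt to stop and check how the last few reviews actually felt.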

Two Kinds of Tired

There are two distinct fatigue patterns in agentic work, and they feel completely different.

What Sustained It

Looking back at eight weeks, the things that kept the pace sustainable weren’t what I expected.

Alignment was load-bearing. The cognitive architecture work I’d done before the build paid for itself a hundred times over. Every design decision was pre-filtered. No deliberation over preferences. No “what should I build?” paralysis. The doctrine answered those questions before they arose.

The AI wasn’t a productivity multiplier; it was a cognitive amplifier. The system didn’t make me produce more code per hour. It made me express more judgment per hour. The throughput that mattered wasn’t lines of code; it was decisions compressed into a smaller time window: architectural choices, feature priorities, quality calls. The AI absorbed the implementation. I supplied the intent.

Micro-closure events prevented drift. Small stabilization moments kept the work legible: end-of-day summaries, thread consolidation, explicit “here’s where we are” anchors. Without them, the velocity would have produced a blur. Every morning started with clear context instead of a cold-start problem. The restart cost stayed near zero.

The specification habit prevented waste. By week two, I’d internalized the pattern: describe how you’d test it before asking the AI to build it. This single habit probably saved more time than any tool or technique. The builds that went sideways were consistently the ones where I skipped this step.
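As a rough illustration of that habit, the sketch below writes the checks first and only then supplies an implementation. The `slugify` task and both function names are hypothetical stand-ins chosen for brevity; nothing here comes from the actual project.

```python
import re
from typing import Callable


def spec_slugify(candidate: Callable[[str], str]) -> None:
    """The specification, written before any implementation exists.

    Any candidate function handed over for the task must pass these checks.
    """
    assert candidate("Hello, World!") == "hello-world"
    assert candidate("  already-slugged  ") == "already-slugged"
    assert candidate("") == ""


def slugify(text: str) -> str:
    """One implementation that satisfies the spec above."""
    # Keep runs of lowercase letters and digits; drop everything else.
    words = re.findall(r"[a-z0-9]+", text.lower())
    return "-".join(words)


# Running the spec against the implementation raises AssertionError on a mismatch.
spec_slugify(slugify)
```

Writing `spec_slugify` first forces the edge cases (punctuation, stray whitespace, empty input) to be decided up front, which is where the sideways builds tended to go wrong.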

What Almost Broke

The identity trap. When the work is going well and the output is impressive, it’s easy to fuse your identity with the velocity. “I am someone who ships at this pace.” That fusion is dangerous because it makes slowing down feel like failure rather than adjustment. I caught myself framing rest as weakness, which is ironically exactly the kind of identity-driven design error the cognitive architecture methodology is designed to surface.

Context decay across weeks. For the first four weeks, everything fit in my head. In weeks five through eight, the system was complex enough that I couldn’t hold the full state. The notes, the architecture docs, and the decision logs stopped being nice-to-haves somewhere around week six. They were the difference between “I know where I am” and “I’m lost in my own project.”

Quality drift under momentum. The faster I moved, the more tempting it became to skip validation. “It works, ship it.” But “works” and “works correctly” are different things, and the gap between them grows when you’re not checking carefully. The automated validation pipeline caught things I would have missed. Without it, the velocity would have produced a fragile system.

The Takeaway

The constraint has moved. It’s not “can I build fast?” anymore — the tooling answers that. The question now is whether I can sustain quality while building fast. And that question doesn’t have a tool answer. It has a self-knowledge answer.

I kept a note in my workflow doc that I rewrote about four times over eight weeks: define “enough” before you start, not after you’re exhausted. By week six it was muscle memory. By week eight it was the only reason the system was still coherent.

The people who sustain high-velocity output over months aren’t the ones logging the most hours. They’re the ones who’ve mapped their own failure modes clearly enough to design around them — who know the exact feeling of crossing their decision ceiling, who’ve already built the stopping point into the system before the momentum makes stopping feel like defeat.

The compound interest of that kind of sustainability, the thing you build over eighteen months when you don’t burn out at week ten, dwarfs anything a sprint produces. I’ve seen both. The sprint is more legible. The sustained build is more real.