How Your Mind Actually Works

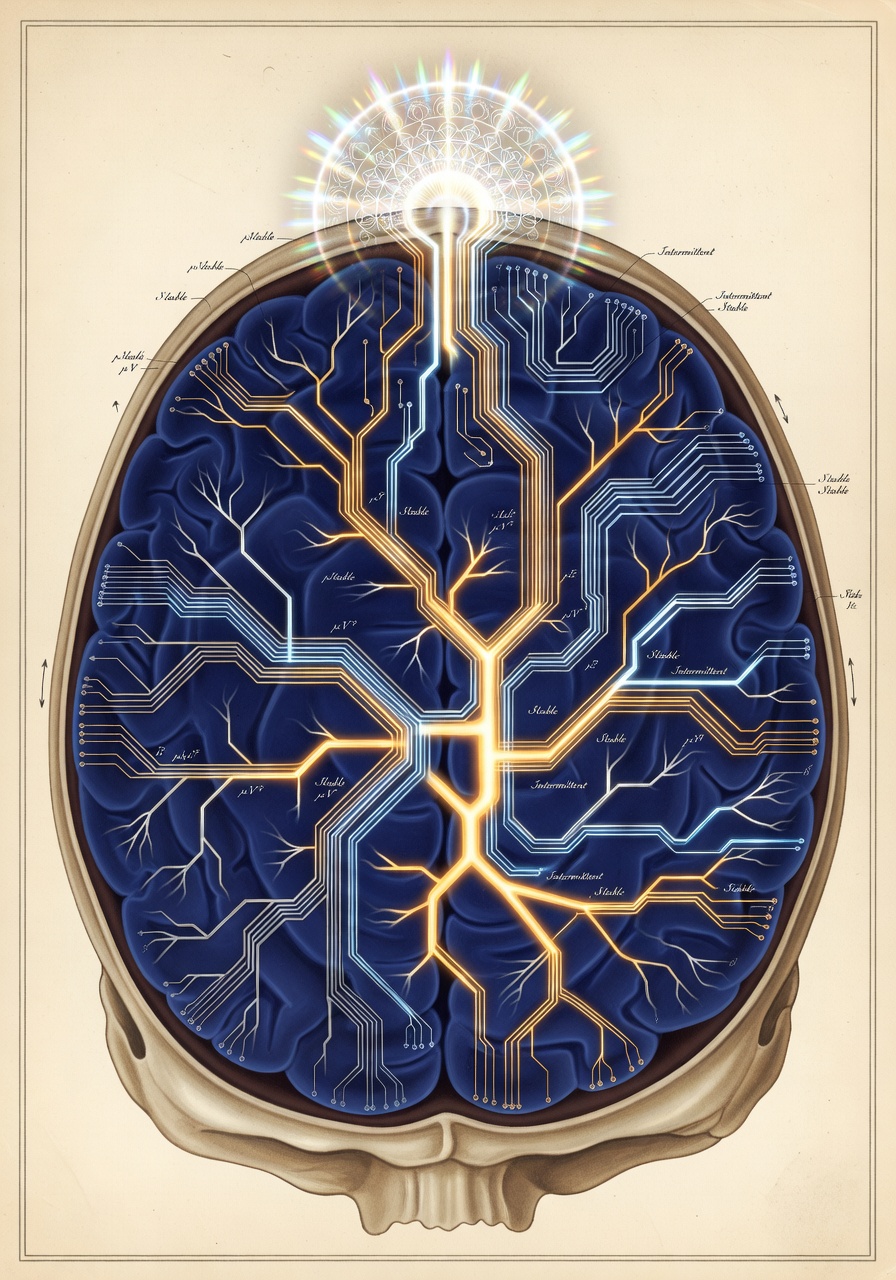

A framework for discovering cognitive operating characteristics and designing systems that fit

I spent the first weeks of a focused build sprint creating personal software and kept hitting the same wall — everything worked but nothing felt right. Features shipped. Systems ran. And I kept second-guessing every design decision because there was no governing principle. Just preferences stacked on preferences.

The breakthrough wasn’t a feature. It was realizing I’d been designing blind.

I was treating myself as a black box labeled “user” and trying to satisfy expressed preferences. Build a note system. Make it searchable. Add tags. All reasonable. All arbitrary. Because I never asked the foundational question: how does my mind actually work?

Once I asked that — once I mapped my actual cognitive operating characteristics instead of my aspirational ones — everything snapped into focus. The arbitrary features stopped being arbitrary. They became testable against a doctrine derived from observed behavior. “Does this preserve cognitive momentum?” became the filter. Features that passed shipped. Features that failed got cut regardless of how much I “wanted” them.

That’s the methodology: build systems that align with how a specific mind actually operates, not just better systems in the abstract.

And this isn’t just personal software. Any system where human cognition is load-bearing (teams, products, organizations, teaching) runs on the same logic: discover operating characteristics, extract doctrine, align design with doctrine.

The Bigger Picture

Before the methodology: cognitive fit is not the only reason people struggle with AI tools, and it’s not even the most obvious one.

People are resistant, sometimes for good reasons. They’re scared of replacement, skeptical of hype, exhausted by the pace of change. Institutional friction compounds: teams that don’t trust the tools, managers who don’t understand the workflow shift, organizations that want AI transformation without changing anything about how they operate. These are real barriers. They matter.

And then there’s the imagination gap. Some people can’t stop generating ideas; they see a new tool and immediately envision twenty things to build with it. Others look at the same tool and don’t see the point. That gap isn’t a cognitive deficiency. It’s a difference in orientation that no framework can bridge on its own.

What I’m describing here is the barrier that hides beneath the others. You can overcome fear, push through skepticism, find the right tools — and still fail, because the workflow you adopted fights how your brain actually moves. That failure is invisible because it feels like a personal shortcoming rather than a design mismatch.

Which makes it the barrier worth examining. Not the only one. The hardest to see.

The Problem

Most design starts with “what do you want?” which is the wrong question entirely.

You sit down to design a system and you ask for requirements. What features? What workflows? What should it do? And you get answers. All reasonable, all plausible — and completely disconnected from how the person’s brain actually moves through space.

Someone says “I want to be more organized” so you build them a rigid filing system and watch it collapse within a week because their cognition is associative and forcing taxonomies breaks momentum. They didn’t lie about wanting organization. They just didn’t know their operating characteristics well enough to recognize that “organized” for them means “fast context reconstruction” not “clean categories.”

Even the sophisticated approaches miss it:

User research asks what people want, not how their minds actually move. It surfaces preferences, not physics.

Personality typing slaps categorical labels on people and calls it insight. But two people with the same type can have completely different cognitive architectures. Categories don’t predict operational behavior.

Requirements gathering documents desired outcomes. All downstream from unexplored assumptions about how the operator actually functions.

Productivity frameworks optimize for throughput without asking what makes throughput sustainable for this specific system. Getting Things Done works brilliantly if your cognition matches the creator’s. If it doesn’t, you’re fighting your own brain to follow someone else’s physics.

The problem is that we treat minds like preference-generators instead of physical systems with operating characteristics.

You don’t just ask an engine “what do you want?” and build to spec. You measure how it actually behaves under load. What burns fuel efficiently. What causes catastrophic failure. What trade-offs are acceptable.

Minds have physics too. Energy dynamics. Failure modes. Load-bearing characteristics. And most design processes never surface them.

The Methodology

Five phases. The output isn’t a personality type or a diagnostic label. It’s a set of operating characteristics and governing principles specific to an individual or team.

You can do this self-guided. You can facilitate it for someone else. You can use an AI to run the examination. The process is the same.

- Phase 1: Systems Mapping
- Phase 2: Pattern Extraction
- Phase 3: Failure Mode Analysis
- Phase 4: Doctrine Derivation
- Phase 5: System Alignment

Phase 1: Systems Mapping

You’re asking questions that surface how the cognitive system behaves, not how it should behave. No aspirations, no therapy. You’re mapping behavioral physics.

The questions are structured around cognitive systems. Each one has observable operating characteristics:

The output of Phase 1 is raw data. Detailed answers about how each system actually behaves. Resist the urge to abstract too early. You’re gathering observations, not conclusions yet.

Phase 2: Pattern Extraction

Now you extract behavioral physics from the raw data: observable patterns that predict system behavior. Labels and diagnoses aren’t the goal.

The difference matters:

The test: can you predict system behavior from the pattern? If you can’t derive a design decision from it, it’s not deep enough yet.

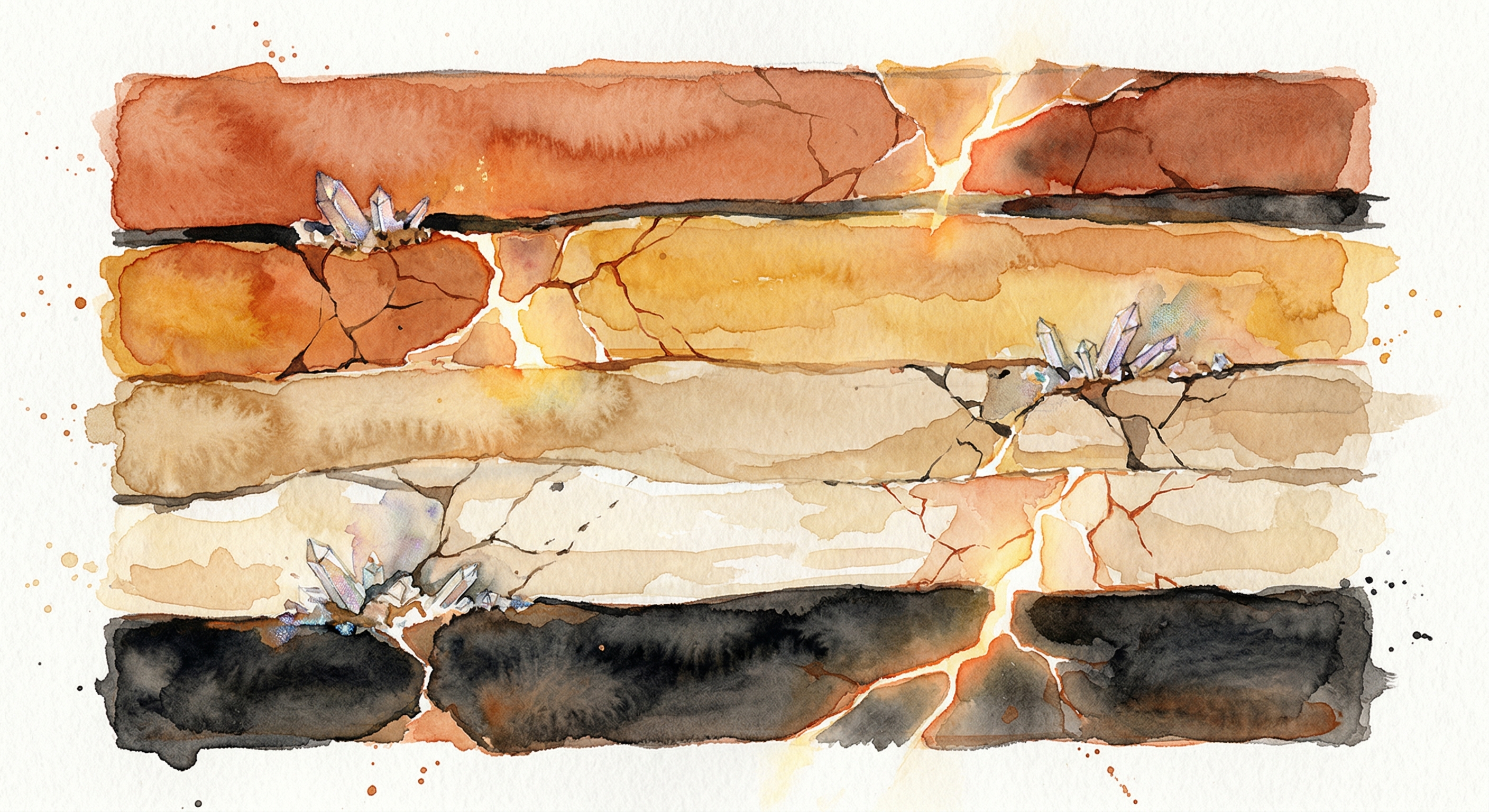

Phase 3: Failure Mode Analysis

Not all failures are equal. Some degrade the system catastrophically. Others are acceptable noise. The difference matters because most “best practices” eliminate acceptable failures by introducing catastrophic ones.

Catastrophic

- Momentum fragmentation

- Flow state disruption

- Forced premature convergence

- Identity threat from misaligned systems

Acceptable

- Disorganization

- Redundancy

- Ambiguity

- Incompletion

Clean desk policy? Eliminates visible mess (acceptable) by forcing premature organization (catastrophic for people who think by spreading work spatially). Single source of truth? Eliminates redundancy (acceptable) by creating a devastating restart penalty when that source becomes unavailable. Strict filing hierarchies? Eliminates ambiguity (acceptable) by breaking associative retrieval.

You have to know which failures you can tolerate and which ones will kill you.
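The trade described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not part of the methodology itself: the names `Severity`, `Practice`, and `is_bad_trade` are hypothetical, the severity table copies the two lists above for one particular person, and mapping "forcing premature organization" onto "forced premature convergence" is my reading of the clean desk example. In practice the classification is a judgment call made from observation, not a lookup.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    CATASTROPHIC = "catastrophic"
    ACCEPTABLE = "acceptable"


# Hypothetical profile for one person, copied from the two lists above.
# Another person's table could classify the same failures differently.
FAILURE_MODES = {
    "momentum fragmentation": Severity.CATASTROPHIC,
    "flow state disruption": Severity.CATASTROPHIC,
    "forced premature convergence": Severity.CATASTROPHIC,
    "identity threat": Severity.CATASTROPHIC,
    "disorganization": Severity.ACCEPTABLE,
    "redundancy": Severity.ACCEPTABLE,
    "ambiguity": Severity.ACCEPTABLE,
    "incompletion": Severity.ACCEPTABLE,
}


@dataclass(frozen=True)
class Practice:
    name: str
    eliminates: tuple  # failure modes the practice removes
    introduces: tuple  # failure modes the practice causes


def is_bad_trade(practice, modes=FAILURE_MODES):
    """True when a practice removes only acceptable failures
    while introducing at least one catastrophic failure."""
    introduces_catastrophic = any(
        modes[f] is Severity.CATASTROPHIC for f in practice.introduces
    )
    removes_only_acceptable = all(
        modes[f] is Severity.ACCEPTABLE for f in practice.eliminates
    )
    return introduces_catastrophic and removes_only_acceptable


# The clean desk example, encoded under the assumption noted above.
clean_desk = Practice(
    name="clean desk policy",
    eliminates=("disorganization",),
    introduces=("forced premature convergence",),
)

print(is_bad_trade(clean_desk))  # prints True
```

The point of writing it down this way is that the severity table is data, not a universal truth. Swap in a different person's table and the same practice can come out as a perfectly good trade.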

Phase 4: Doctrine Derivation

From patterns and failure modes, you derive governing principles. These are laws derived from observed behavior, not aspirations dressed up as rules.

The goal is a primary directive: a single principle that governs system design. This becomes the filter every decision passes through.

Examples: “Preserve and extend cognitive momentum.” “Minimize identity threat in all operations.” “Optimize for context reconstruction speed.” “Protect creative incubation states.”

Your primary directive should be descriptive (derived from observation, not aspiration) and testable: you need to be able to evaluate whether a decision actually violates it. It should be generative (producing design implications, not just constraints) and singular. One core principle. Not a list that you then have to adjudicate between.

Accompany the directive with a meta-rule: a question that tests alignment. “Does this preserve or degrade cognitive momentum?” “Does this make context reconstruction faster or slower?” That question becomes your decision filter. Run everything through it: features, workflows, tooling choices, all of it.

Phase 5: System Alignment

Now you apply the doctrine to every system decision.

For each existing or planned feature, ask: Does this align with the primary directive? Does it protect against catastrophic failures? Does it tolerate acceptable failures?

What happens next is clarifying. Features that felt arbitrary become necessary, or get cut entirely. “Best practices” that violate the doctrine get rejected regardless of how popular they are. New features emerge naturally from the doctrine that you never would have specified as requirements.

None of these emerge from preferences. They cascade from applying the doctrine to observed operating characteristics. And they’re load-bearing: remove any one and the system stops working for the person it was designed for. What makes them load-bearing is alignment with that person’s cognition, not being “good features” in the abstract.
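The three Phase 5 questions can be written as a small decision filter. The sketch below is a hedged illustration: `Doctrine`, `Feature`, and `review` are hypothetical names, the `supports_directive` flag stands in for a human answer to the meta-rule, and the risks attached to each example feature are assumptions made for the demo rather than anything measured.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doctrine:
    primary_directive: str
    meta_rule: str
    catastrophic: frozenset  # failures that must never be introduced
    acceptable: frozenset    # failures the system is allowed to tolerate


@dataclass(frozen=True)
class Feature:
    name: str
    supports_directive: bool       # human judgment: does it pass the meta-rule?
    risks: frozenset = frozenset()  # failure modes the feature may cause


def review(feature, doctrine):
    """Return a (verdict, reason) pair for one feature."""
    triggered = feature.risks & doctrine.catastrophic
    if triggered:
        return "cut", "introduces catastrophic failure: " + ", ".join(sorted(triggered))
    if not feature.supports_directive:
        return "cut", "fails meta-rule: " + doctrine.meta_rule
    # Risks that are only in the acceptable set are tolerated on purpose.
    return "keep", "aligned with primary directive"


doctrine = Doctrine(
    primary_directive="Preserve and extend cognitive momentum.",
    meta_rule="Does this preserve or degrade cognitive momentum?",
    catastrophic=frozenset({"momentum fragmentation", "flow state disruption"}),
    acceptable=frozenset({"disorganization", "redundancy"}),
)

# Example features; the risk assignments are assumptions for illustration.
features = [
    Feature("capture without taxonomy", True, frozenset({"disorganization"})),
    Feature("daily task lists", False),
    Feature("strict project boundaries", True, frozenset({"momentum fragmentation"})),
]

for f in features:
    verdict, reason = review(f, doctrine)
    print(f"{f.name}: {verdict} ({reason})")
```

Checking catastrophic risks before the meta-rule is a design choice in this sketch: a feature that supports the directive in principle still gets cut if it brings a catastrophic failure with it.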

Proof Points

The Personal Cascade

I built a cognitive workspace using this methodology. It started as a “collection of features I think I want.” After one design pass, it became “necessary architecture.”

Five core features that felt like preferences became non-negotiable once the cognitive architecture was explicit: soft focus (dim unrelated work instead of hiding it), warm threads (surface recent context instead of archiving), high-recall search (broad associative retrieval over narrow precision), capture without taxonomy (never force categorization at point of entry), and micro-closure events (small stabilization moments that preserve progress visibility without forcing completion).

Features that violated the doctrine got rejected regardless of how popular they were. Daily task lists? Out: they optimize for completion detection; I detect momentum. Strict project boundaries? Out: they fragment context; my cognition needs soft boundaries.

The result: a system that feels like an extension of thought rather than a tool requiring translation. “Better” is beside the point. It’s aligned.

The Facilitation Proof Point

Five hours of conversation across two sessions. I never prescribed a solution. I just asked questions that surfaced how someone actually worked. The gap between that and how they thought they should work did all the explaining on its own.

They’d been forcing themselves into a borrowed framework that looked right on paper. The friction was constant. They thought the problem was them. It wasn’t. They were already thinking about their work in a sophisticated, effective way. They just couldn’t see the pattern because they were describing it in someone else’s language. Once the methodology surfaced the actual operating characteristic, made it explicit, and named it, the right approach became obvious to them without anyone prescribing it.

They independently restructured their entire process afterward.

Help people recognize what they already know. Then get out of the way and let them run with it.

Where This Sits in the Literature

This methodology didn’t emerge from academia. It emerged from building software, hitting a wall, and eventually asking why features I was convinced I needed kept failing on contact with actual use. But the principles beneath it have been studied for decades across multiple fields.

Cognitive Work Analysis (Vicente, Rasmussen) systematically analyzes cognitive demands to inform system design, originally for safety-critical domains. The principle that understanding cognitive constraints enables better design is well established.

Distributed Cognition (Hutchins) demonstrates that cognition extends beyond the brain into tools and environment. Designing external systems as cognitive extensions has been a research program since the 1990s.

Cognitive Fit Theory (Vessey) provides empirical evidence that matching tools and representations to cognitive patterns improves performance.

Personal Construct Psychology (Kelly) offers methods for eliciting individual-specific frameworks through structured questioning. Direct precedent for the examination process described here.

What this methodology adds is a specific integration: self-applied cognitive work analysis, with individually derived constitutional doctrine, incorporating shame and identity dynamics as design constraints. The components exist in the literature. Applying them as a self-directed design methodology for personal systems is less explored territory.

The Transferable Insight

The pattern: discover operating characteristics, extract doctrine, align system design with doctrine.

It applies anywhere human cognition is load-bearing. Team design means asking what the collective cognitive operating characteristics actually are. Product design means discovering your users’ cognitive architecture, not just cataloguing their preferences. Organizational design means tracing how information actually flows and identifying which failures fragment institutional knowledge.

The methodology is a recognition engine. The system was already implicit in how a person or team works. You’re just making it visible.