When Systems Fix Themselves

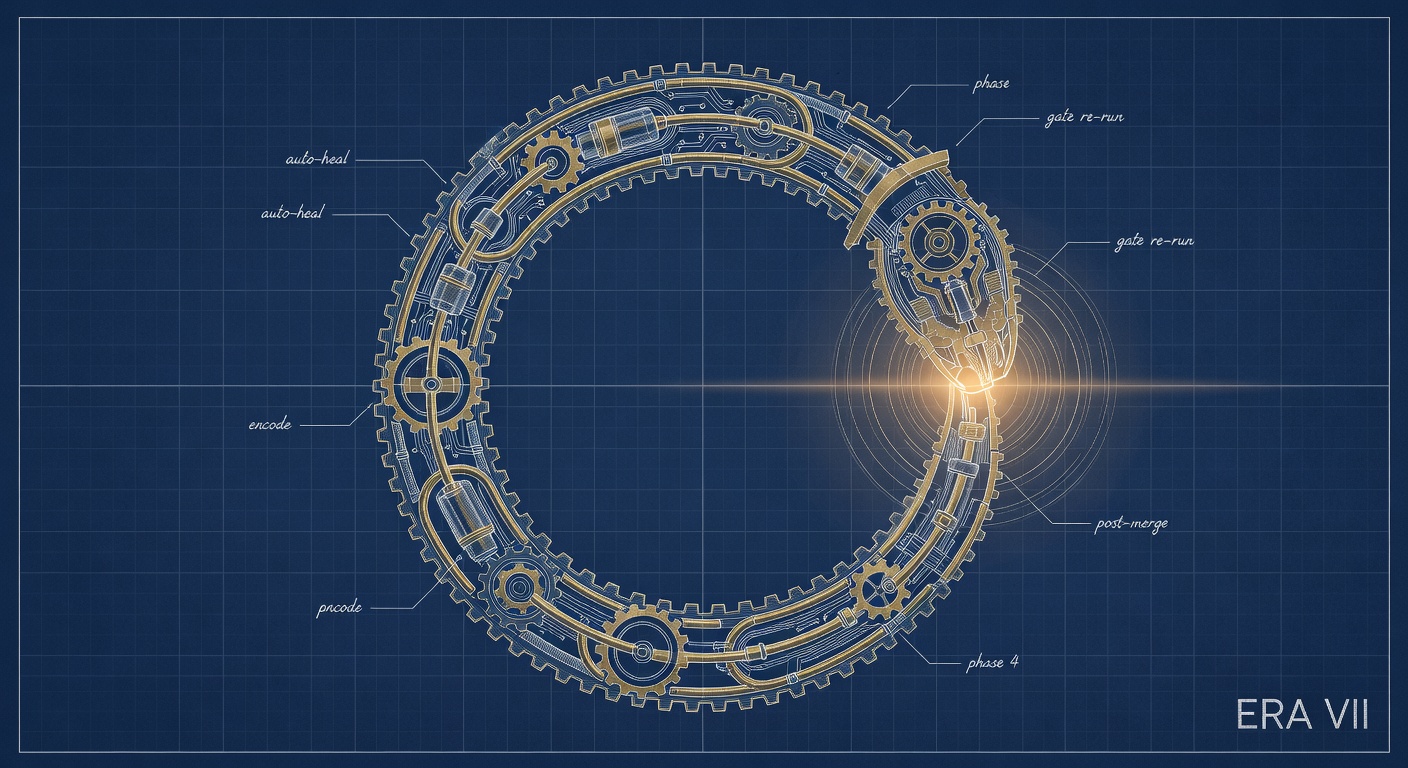

Building an auto-healing architecture by composing the patterns that couldn't do it alone

There’s a design pattern book I kept returning to during the Observatory build: Antonio Gulli’s expansion of Andrew Ng’s foundational agentic patterns into a full taxonomy, twenty-one patterns in a Springer volume. Reflection. Exception handling. Human-in-the-loop. Each gets its own chapter, its own diagram, its own worked example. And each makes perfect sense until you need more than one at a time.

The book doesn’t show you what happens when the problem you’re solving won’t fit inside a single pattern. That’s what I ran into building Observatory’s auto-healing loop.

The Problem That Made It Necessary

Observatory is an AI dispatch system. Agents receive work packages (code changes, infrastructure modifications, targeted interventions across a codebase) and execute them autonomously. Each work package is a campaign. Campaigns run, agents commit, agents merge, and the system moves forward.

In theory.

In practice, things went wrong in ways that were predictable in retrospect and invisible in the moment. Merge conflicts. Constraints violated because the repository had drifted since the campaign was designed. Code that technically merged but broke something adjacent. And the quietest failure: the same mistakes showing up again in later campaigns, because nothing in the system remembered what went wrong last time.

My response was to fix them by hand. Every time. Find the conflict, resolve it. Catch the constraint violation, patch the work package. Review the merged code, catch the regression. Every failure routed through me, and every fix evaporated when the conversation ended.

That’s not a system. That’s a person working very fast.

What the Patterns Said (and Didn’t Say)

Each failure mode pointed at a recognizable pattern from the literature.

Merge conflicts pointed at exception handling: detect, attempt recovery, escalate if recovery fails. Pre-dispatch violations were clearly reflection, examining current state against constraints before acting. Post-merge quality issues? Also reflection, but applied after the fact. The human time consumed by every failure mapped to human-in-the-loop, routing only the decisions that actually need a person.
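To make the exception-handling shape concrete, here is a minimal sketch of detect, attempt recovery, escalate. It is not Observatory's code. The names `Outcome`, `handle_conflict`, and `try_resolve`, and the retry limit, are all hypothetical, chosen only to illustrate the pattern.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Outcome:
    resolved: bool
    handled_by: str  # "agent" or "human"
    detail: str


def handle_conflict(
    conflict: str,
    try_resolve: Callable[[str], Optional[str]],
    max_attempts: int = 2,  # assumed limit, not taken from the article
) -> Outcome:
    """Attempt autonomous recovery; escalate to a human only if it fails."""
    for attempt in range(1, max_attempts + 1):
        resolution = try_resolve(conflict)
        if resolution is not None:
            return Outcome(True, "agent", f"attempt {attempt}: {resolution}")
    # Recovery failed: this is the only point where a person is pulled in.
    return Outcome(False, "human", f"escalated after {max_attempts} attempts: {conflict}")


if __name__ == "__main__":
    print(handle_conflict("both sides edited config.yaml", lambda c: None))
```

Notice what the sketch does not do: nothing in `handle_conflict` records the incident for later. That gap is the subject of the rest of this piece.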

And repeated failures? That one didn’t map to any single pattern. It pointed somewhere the taxonomy doesn’t go.

The problem is that exception handling handles the conflict but produces no learning. Reflection catches the problem but doesn’t fix the root cause. And human-in-the-loop, by definition, keeps humans in the critical path forever. None of them close the loop on their own. A loop that doesn’t close doesn’t heal; it just fails more slowly.

What I needed was a structure where each pattern handed off to the next. Where a failure triggered not just recovery but the conditions for future immunity. That’s not a pattern. That’s architecture.

Five Phases, Five Campaigns

The architecture didn’t arrive whole. It grew one phase at a time, each proving itself before the next got built. Five phases, five campaigns.

The Novel Piece

Phases 0 through 3 are about recovery: getting the system back to working after something breaks. Phase 4 is different. Phase 4 is about immunity.

Most resilience engineering stops at recovery. Detect the problem, respond, restore function. That’s valuable, but it means you’re solving the same problems forever. Immunity is harder. It requires the system to look at its own failure history and change its behavior going forward.

What Phase 4 actually does is give agents a kind of institutional memory. The agents themselves don’t persist between campaigns; each one starts fresh. But the organization remembers. The next agent that walks in gets a briefing: here are the failure patterns this codebase has seen, here’s what caused them, here’s what to watch for.

This is what’s missing from the standard pattern taxonomy. Reflection looks backward at one action. Encoding, the step Phase 4 adds, looks backward at a whole history of failures and compresses it into guidance that faces forward. It’s closer to organizational learning than to any individual cognitive pattern, and it only works when the other phases are already running. The encoding needs failures to learn from. The other phases make sure those failures get caught and resolved instead of piling up silently.
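One way to picture that institutional memory is a small store of encoded incidents that gets filtered into a briefing for each new agent. This is a sketch under assumptions, not the real data model: `Encoding`, `FailureMemory`, and the `area` field used for filtering are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Encoding:
    area: str       # part of the codebase the failure touched (assumed field)
    what: str       # what happened
    why: str        # diagnosed cause
    watch_for: str  # forward-facing guidance


@dataclass
class FailureMemory:
    encodings: list[Encoding] = field(default_factory=list)

    def encode(self, incident: Encoding) -> None:
        """Record a resolved incident so later campaigns can see it."""
        self.encodings.append(incident)

    def briefing(self, areas: set[str]) -> str:
        """Build the text a fresh agent receives before it starts work."""
        relevant = [e for e in self.encodings if e.area in areas]
        if not relevant:
            return "No known failure patterns for this campaign's areas."
        lines = ["Known failure patterns:"]
        for e in relevant:
            lines.append(f"- [{e.area}] {e.what} (cause: {e.why}); watch for: {e.watch_for}")
        return "\n".join(lines)
```

The agent stays stateless. Only the `FailureMemory` persists, which matches the split described above: agents start fresh, the organization remembers.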

What the Loop Actually Looks Like Closed

When all five phases are running, the system behaves differently. Not incrementally better — differently.

A campaign executes. Before dispatch, constraints are validated. If they fail, the campaign gets corrected before it ever runs. During execution, conflicts get resolved autonomously. After merge, a review agent examines what actually landed. Problem surfaces? Correction fires immediately. And after resolution, the incident is encoded: what happened, why, what to watch for next time.

Here’s where it gets interesting. The next similar campaign arrives with that encoding baked in. Pre-dispatch validation now knows to check for the specific constraint that drifted last time. The merge agent has seen this conflict pattern before. The review agent knows what a regression looks like in this particular corner of the codebase. The system got smarter about its own weak spots.
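The closed loop described above can be sketched as a single function. Everything here is illustrative: `run_campaign` and its callable parameters are hypothetical names, memory is reduced to a list of strings, and autonomous conflict resolution is assumed to happen inside `execute`.

```python
from typing import Callable


def run_campaign(
    campaign: dict,
    memory: list[str],
    validate: Callable[[dict, list[str]], list[str]],
    execute: Callable[[dict], str],
    review: Callable[[str], list[str]],
) -> list[str]:
    """Run one pass of the loop and return a log of the steps taken."""
    log: list[str] = []

    # Before dispatch: validate constraints, informed by earlier encodings.
    violations = validate(campaign, memory)
    if violations:
        campaign = {**campaign, "corrections": violations}
        log.append("corrected before dispatch")

    # Execution (conflict resolution is assumed to live inside execute).
    merged = execute(campaign)
    log.append("executed")

    # After merge: review what landed, correct it, then encode the incident.
    for problem in review(merged):
        log.append(f"corrected: {problem}")
        memory.append(problem)  # this write is what closes the loop

    return log
```

Run it twice on the same area and the second pass is corrected before dispatch, because `validate` now sees what the first pass encoded. The feedback is a single `memory.append`, but without it each run starts as blind as the last.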

My role in this loop is not to execute any of these steps. It’s to watch for boundary cases: a failure nobody’s seen before, an ambiguity that exceeds what autonomous resolution can handle, an encoding that needs judgment rather than mechanics. I went from being in the operational path to being in the supervisory path.

That sounds like a small distinction. It isn’t. Operational presence means every failure waits for you. Supervisory presence means the system runs, and you step in when it hits something that actually needs you. The difference is whether you can walk away from the keyboard.

Trust as an Engineering Constraint

The architecture didn’t arrive all at once. I wouldn’t have trusted it if it had.

Phase 0 had to prove itself — agents closing their own work without going wrong — before I’d let Phase 1 touch pre-dispatch validation. Phase 1 had to earn its keep before I was comfortable with Phase 2 taking autonomous action on merges. Each phase ran for at least one real campaign before the next got built on top of it.

This is the right sequencing. Each phase is a load-bearing layer for the ones above it. Autonomous resolution only works if detection is reliable underneath it. Encoding only works if the resolution layer hands it incidents that were diagnosed and resolved, not undiagnosed messes. Build Phase 4 on top of an unstable Phase 2 and you get confident encoding of bad resolutions: institutional memory for the wrong lessons.
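The sequencing rule is simple enough to state as a gate: a phase may be built only when every phase below it has run on at least one real campaign. A minimal sketch, with the `Phase` type, the `proven_campaigns` counter, and `may_build` all assumed for illustration:

```python
from dataclasses import dataclass


@dataclass
class Phase:
    name: str
    proven_campaigns: int = 0  # real campaigns this phase has run on


def may_build(phases: list[Phase], index: int, min_proven: int = 1) -> bool:
    """True only if every phase below `index` has proven itself."""
    return all(p.proven_campaigns >= min_proven for p in phases[:index])


if __name__ == "__main__":
    phases = [Phase("Phase 0", 2), Phase("Phase 1", 1), Phase("Phase 2"),
              Phase("Phase 3"), Phase("Phase 4")]
    print(may_build(phases, 2))  # Phase 0 and Phase 1 are proven
    print(may_build(phases, 4))  # Phase 2 and Phase 3 are not
```

The counter is a stand-in for something no integer captures: having watched the layer work. Still, the gate makes the dependency explicit, so nothing gets stacked on a layer that has only been designed.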

Trust is earned by watching, not by reading the design doc. The design might be correct. The implementation might match. But the only way to know the detection layer actually catches what it should is to watch it run on real campaigns, with real failures, in conditions you didn’t think of when you wrote the spec.

Incremental trust compounds. By the time I built Phase 4, I trusted the four layers below it — not because I believed in the design, but because I’d watched them work. I wasn’t stacking uncertainties. I was adding one new layer on a foundation I’d already pressure-tested.

If you’re building agentic architecture, the instinct is to design the whole thing and then build it. Resist that. Design the whole thing, build the foundation, let it prove itself, then extend. Your design doc is a roadmap. It’s not a deployment plan.

The Economics of Self-Repair

The five campaigns that built this architecture cost $27 in dispatch. That’s the actual execution where agents write and merge code. Each campaign ran under $8. But that’s not the whole picture.

Every campaign also had a planning phase: specification, constraint definition, review council rounds, fresh-eyes passes. I have reliable cost data for two of the five. C28’s full lifecycle ran about $21 (dispatch was $6.50 of that). C34’s ran $26-30 (dispatch was $6-10). The planning phases for the other three weren’t itemized, but they all went through multi-round review pipelines. Best guess for the full lifecycle across all five: somewhere in the $80-120 range.
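The arithmetic behind those figures, worked through. The inputs are the numbers quoted above, and the $80-120 lifecycle range is a best guess, so every derived value inherits that uncertainty. The variable names are mine.

```python
# Figures quoted in the text, in dollars.
dispatch_total = 27.0                         # dispatch cost, all five campaigns
lifecycle_low, lifecycle_high = 80.0, 120.0   # best-guess full-lifecycle range

# Implied planning cost across all five campaigns.
planning_low = lifecycle_low - dispatch_total    # 80 - 27 = 53
planning_high = lifecycle_high - dispatch_total  # 120 - 27 = 93

# Dispatch as a share of the full lifecycle.
share_low = dispatch_total / lifecycle_high   # 27 / 120 = 0.225
share_high = dispatch_total / lifecycle_low   # 27 / 80 = 0.3375

# C28 is one campaign with itemized data: about $21 total, $6.50 dispatch.
c28_planning = 21.0 - 6.5                     # about 14.5

if __name__ == "__main__":
    print(planning_low, planning_high, c28_planning)
    print(share_low, share_high)
```

So if the lifecycle estimate holds, planning is roughly $53 to $93 of the total, and dispatch is somewhere between a fifth and a third of it. Most of the spend goes into planning, not into the execution where agents write and merge code.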

That’s still cheap. A single merge conflict used to eat thirty minutes of my attention. A pre-dispatch failure that slipped through produced a confusing half-done state that took longer to diagnose than it would have taken to prevent. Post-merge regressions that wouldn’t surface for two more campaigns — those were the expensive ones. Now they get caught and encoded the same day.

The planning cost is front-loaded. As institutional knowledge builds, as the system encodes more failure patterns and the spec pipeline gets better at anticipating them, each successive campaign’s planning phase gets cheaper. The early campaigns required extensive review because the patterns were new. Later ones benefit from what the earlier ones learned.

The real asset is attention. The alternative to autonomous conflict resolution isn’t free; it’s thirty minutes of my time per conflict, plus the context-switching tax of dropping whatever I was actually doing. The economic case for auto-healing was never about API cost. It was about buying back the attention budget of the person running the system.

The encodings compound. Any single encoded failure is a small improvement. But six months of encodings? The system gets measurably smarter about the failure patterns that actually appear in this codebase — not the abstract ones from training data. That’s a different kind of value, and it only grows.

What Doesn’t Heal Itself

Here’s what the loop doesn’t cover.

Novel failures, stuff the system hasn’t seen before, still need a human to diagnose. The loop gets progressively better at known failure patterns. It does nothing for new ones until they’ve been through the cycle once.

Design failures are a bigger gap. If the work package is specified wrong, not executed wrong but conceived wrong, the auto-healing loop can’t help. Pre-dispatch validation checks constraints, but it can’t tell you whether the intent behind the campaign makes sense. That’s still specification work. Still human work.

And the loop itself can fail. Phase 2 has produced wrong merges when the ambiguity was beyond what it could handle. Phase 4 has encoded the wrong lesson when the diagnosis was incomplete. These aren’t hypothetical. They happened, and they needed human correction. The loop reduces how often I have to intervene and how much it costs when I do. It doesn’t make intervention unnecessary.

What Changes When the Loop Closes

Building this changed how I think about agentic systems.

Before the loop, every failure was an interruption. Something broke, I fixed it, I went back to what I was doing. Each one was its own discrete event. The system had no memory and no way to carry anything forward.

Now failures are inputs. Something breaks, the system recovers, the recovery generates an encoding, the encoding becomes context for next time. Still a failure, but also a data point that makes the same failure less likely to happen again.

That’s the deeper shift. Not a system that doesn’t fail, but a system that learns from failing. Given enough cycles, it converges toward its own stability — not because someone designed all the edge cases out, but because it encountered them and remembered.

The patterns that got me here — reflection, exception handling, human-in-the-loop — each did something essential. But none of them, on their own, produce a system that learns. The learning comes from the composition. From the handoffs between phases. From the fact that the last phase feeds information back into the first.

That’s what the design pattern books don’t cover. Not how each pattern works; they cover that fine. What happens when you wire them together. The capability that matters isn’t in any one chapter. It lives in the connections between them.